Update on the Review Process

ACE will release updated recommendations on May 14. We have been working on the process since January; this post will cover some general observations that we have made, along with things that we have learned. We are unable to mention specifics at this time, as we are currently requesting permission to publish various materials from organizations that made it to our medium review round. However, we can provide some more generalized information about what we have found thus far.

We began our process by compiling a list of U.S. animal organizations for review. Using heuristics based on what we see as most promising, and in accordance with our philosophy, we narrowed the field to farm animal charities only. One of the most important factors in this decision can be seen in our donation distribution chart, which notes that although the vast majority of animals killed in the U.S. come from farms, farm animal advocacy is a very underfunded area. We listed the most notable groups and also contacted ten leaders of advocacy organizations to ask for their recommendations. As a result of these efforts, we reviewed 29 U.S. farm animal organizations.

Our plan was to conduct a shallow review of each of these 29 organizations using publicly available information. We looked through their websites, recorded information from their 990 forms and other financial reports, and sometimes factored in external resources if needed. We tried to evaluate organizations solely on these resources at this stage. To maintain a level playing field, we didn’t contact organizations to request information that we could not find. Instead, we stuck to a very basic level of examination, as we anticipated that this would be adequate for reducing the number of organizations we would look at in subsequent rounds, given the small number of organizations we started with.

We were surprised at how often we found it difficult to determine exactly what an organization does based on its website, and at how frequently we found websites to be obviously outdated. Some listed their mission but did not mention the activities they engaged in to accomplish it. Others listed their efforts but failed to explain what they were ultimately trying to achieve with their work. Some provided both, but it was hidden in a sea of other information.

We created a document for every organization and answered as many questions as we could based on our criteria. This gave us a more accurate picture of each organization, and through internal discussion (between Allison, our Director of Research, and me), we came to a consensus that seven organizations seemed to have the most potential based on our criteria. We wrote up summaries for each of the other 22 organizations and proceeded to a medium review round for the seven. We presented each shallow review summary to the respective organization and asked them to approve or suggest edits to our review, and then for permission to publish the summary on our site. Some organizations agreed with our review, some suggested edits we agreed with, and some suggested edits that we didn’t agree with. Some declined to be reviewed and asked that we not publish their information on our site, and some never responded to our initial contact request. As such, we will publish as much information as we can on May 14 along with our recommendations.

The medium review round began with Allison and me scheduling a call with a key representative from each organization, in which we discussed a wide array of areas related to our criteria. After assembling a summary of this information (of which, again, we will publish as much as we are allowed), we emailed follow-up questions that dealt with more specific information, such as a detailed breakdown of resource expenditures in their budget. We continued the conversation with each of these groups to obtain whatever additional resources we felt we needed to make a reasonable judgment.

We took a chance in moving one of these seven groups to the medium round, as we felt that they had some innovative ideas that could potentially have a large impact if implemented and refined. We weren’t able to learn as much as we would have liked by simply reviewing their site, so we included them in the next round as a high-risk, high-reward candidate. When we spoke with their leadership, they declined to be reviewed this year, as they felt they were too new at this time to be considered an effective organization. We instead wrote a shallow review of this organization.

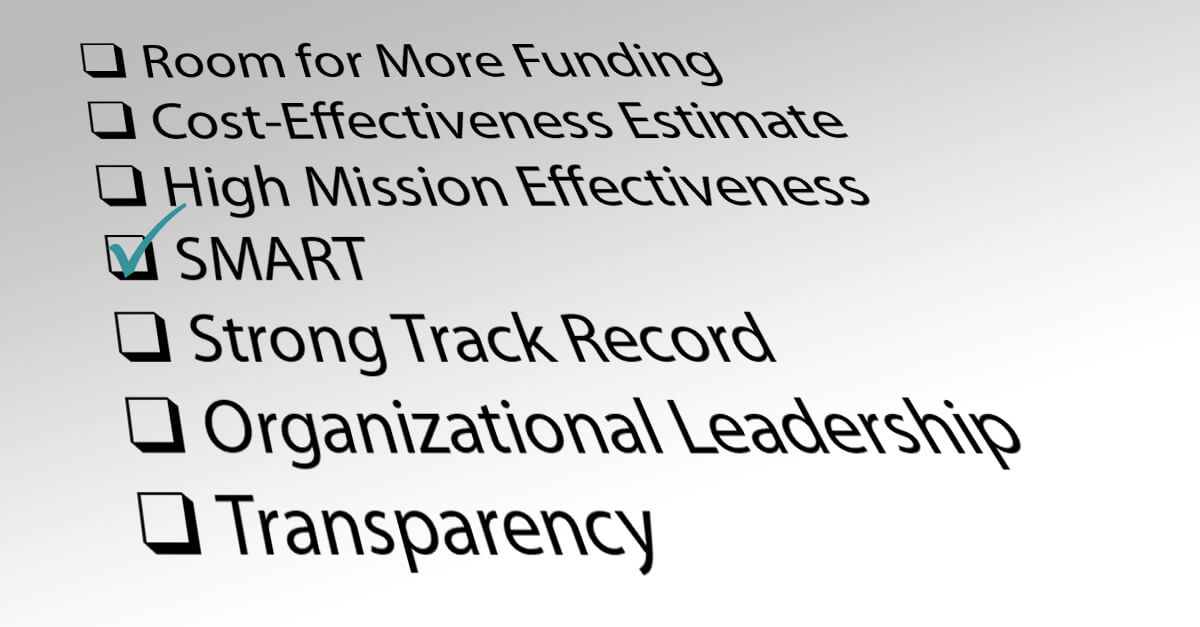

Throughout this medium review process, Allison and I scheduled several long calls in which we went over the information provided by each organization in more detail. We don’t yet have permission to publish much of this, but while I cannot address specifics in this post, I can say that we were mostly pleased with the cooperation of the organizations that were part of the medium review round. We were able to gain a fairly intimate understanding of their work and how they spent their money. We learned valuable information about each organization, such as how open they are to change based on new evidence, how much room for funding they have, and roughly how cost-effective their programs are.

ACE will come to a conclusion on our recommendations in the coming week, and will work on updating our site for publication of the new recommendations on May 14. We have learned a great deal through this process, and look forward to sharing as much information as we can with our followers. We’ve also recently discovered a resource that will help us map the animal advocacy organization landscape across the world, which will greatly aid our efforts in considering international advocacy organizations in the future. However, our ability to examine a larger number of organizations is contingent on meeting our own funding needs; if you appreciate our mission, please consider supporting our work today.

About Jon Bockman

Jon has held diverse leadership positions in nonprofit animal advocacy over the past decade. His career prior to ACE included serving as a Director at a shelter and wildlife rehabilitation center, as a humane investigator, and as a Founder of a 501(c)3 farm animal advocacy group.

ACE is dedicated to creating a world where all animals can thrive, regardless of their species. We take the guesswork out of supporting animal advocacy by directing funds toward the most impactful charities and programs, based on evidence and research.

Comments

I am extremely disheartened to see that your top charity recommendations are all organizations which support the concept of welfare and do not work towards abolition of animal slavery. I do not believe that baby steps will ever result in the status of animals actually changing. Your evaluations do not work for me at all. I will need to donate according to my own moral criteria.

“all organizations which support the concept of welfare and do not work towards abolition of animal slavery”

This is simply untrue. All of the major veg promotion charities in the US spend nearly all their resources on promoting vegetarianism/veganism/ending animal slavery, with a small amount going to welfare reforms. The evidence is strong that hardline approaches are ineffective. I would recommend Nick Cooney’s book, Veganomics, or any videos or podcasts of his on the internet for further info on the subject. I just listened to ARZone’s podcast interview with him yesterday: it was eye opening. On matters as important as animal liberation, it’s crucial to base our views/actions on evidence, rather than just our gut. You can always make targeted donations to animal charities. I have mixed feelings about certain aspects of veg charities myself, but I get around them by making targeted donations to the programs I feel most confident about.

Hi Linda, we don’t believe that supporting the concept of welfare and working toward abolishing animal slavery are mutually exclusive. Throughout our full reviews, you’ll see that we acknowledge that different approaches are necessary to change social perceptions of animals; we believe that the strategies employed by THL and MFA seem particularly promising. If you disagree with our conclusions, you can still use all of the reasoning that we publish in our reviews to make an informed decision on where to donate.

To provide a little more clarification, our top recommendations THL and MFA work toward animal liberation and don’t think that any animals should be used, which is the same goal that abolitionists work towards. However, their tactic is to support incremental changes, which is why for example they supported the “egg bill” where the US was considering federal legislation to mandate bigger cages for chickens. Abolitionists opposed the bill as it didn’t outlaw the raising of chickens for food but just changed their living conditions. So, the difference between your stance, Linda, and that of our top recommendations, appears to be on the level of which tactic works.

We don’t claim to have a final answer to the debate about which tactic works best between advocating “abolition” vs “incremental steps as progress,” which is why we write in our strategic plan that we strive to find recommendations “that could be supported by abolitionists, welfarists, and negative leaning utilitarians.” As shown by your comment, we haven’t achieved this (and it might not be possible to really achieve in practice), but at least we feel that the goals of our top recommendations can be supported by each of these three parts of the animal movement.

We’re happy to hear your thoughts on this or receive evidence or arguments showing that one should advocate only for complete abolition of animal use instead of incremental steps, should you wish to offer them. You may reply here or contact me directly by email.