The Process Leading to Our 2018 Recommendations

This post provides a brief discussion of the specific process that led to our 2018 recommendation update. For a more detailed description of the process, please see our 2018 Evaluation Process page. For a general description of our evaluation process, please read about our General Evaluation Process.

Overview

We updated our charity evaluation process this year in three ways: we engaged consultants for each of our seven evaluation criteria, provided each charity with an evaluation handbook, and asked each charity to distribute our new organizational culture survey to all staff and key volunteers, with the results sent directly to us.

Our formal evaluation process took place from June through November. The general timeline of our evaluation process was as follows:

- We selected charities to review and sent out invitations to participate along with the charity evaluation handbook in early July.

- We began gathering information and conducting research for comprehensive reviews in July.

- We completed full drafts of our comprehensive reviews in September.

- In September, we solicited feedback from our board, our Executive Director, our former Director of Research, and the criterion consultants, and worked to incorporate it into the initial drafts.

- We finalized our recommendation decisions in early October and communicated them to the charities under review between October 22 and October 24, 2018.

- We published our recommendations on November 26, 2018.

The process led to the publication of 11 new or updated comprehensive reviews and an update to our recommendations.

Our Selection Process

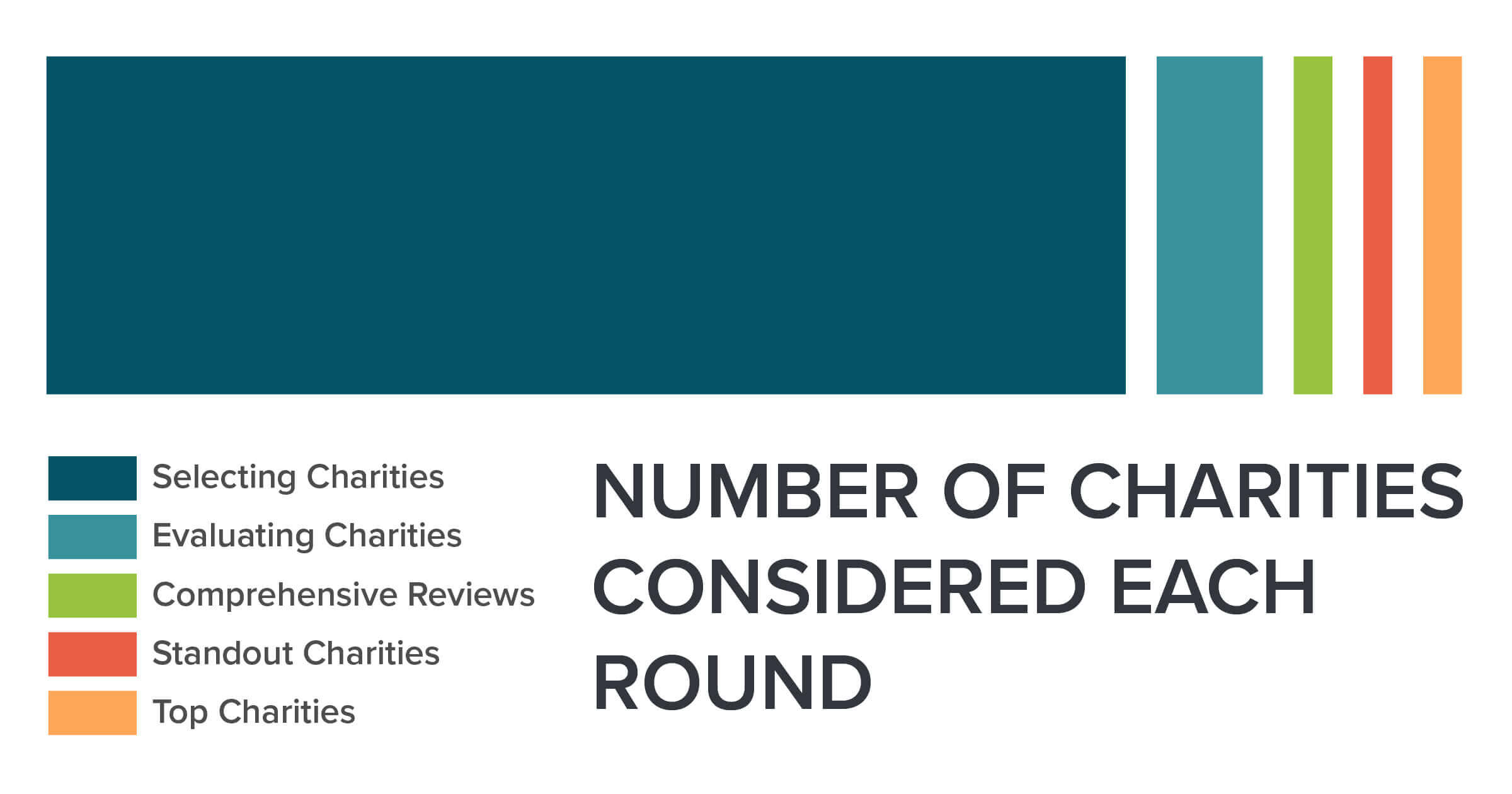

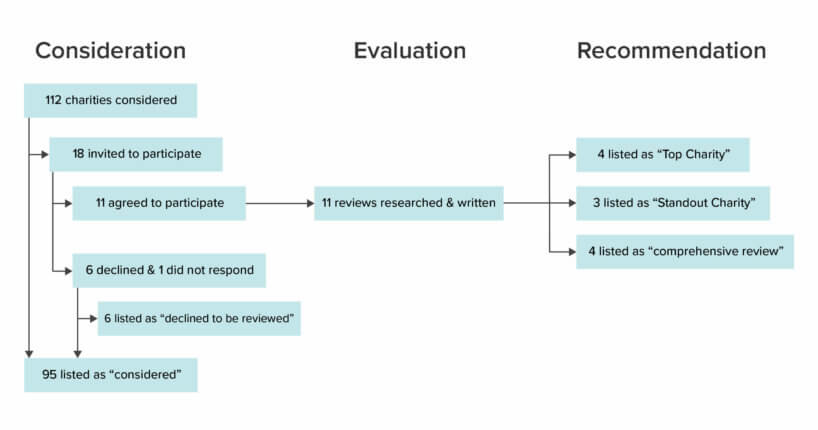

We began our 2018 evaluation process by compiling an internal list of 112 charities to consider evaluating. We then reviewed this list and attempted to identify the charities that seemed most valuable for us to evaluate.[1] We initially selected 15 charities for evaluation, based on factors such as how likely we thought each charity was to be recommended and how useful we expected the knowledge gained, and potentially published, from a comprehensive evaluation to be. We sent our charity evaluation handbook to each of these 15 charities and formally invited them to participate in the review process. Five of the 15 declined to be reviewed and one did not respond,[2] leaving nine participants. We then selected three more charities and invited them to participate. Two of these three accepted, giving us a total of 11 charities to evaluate: all three of our Top Charities from 2017, four of our Standout Charities from 2016, and four charities that we had not previously reviewed.

Comprehensive Evaluations

At the start of the evaluation process, we asked each charity to provide documentation of their finances, accomplishments, and strategy, and we scheduled information-gathering interviews with their leadership. To assess workplace culture, we requested contact information for all staff in order to conduct confidential calls, and we provided a survey for distribution among staff and volunteers.[3] After conducting interviews and gathering the requested documentation, we began drafting the reviews and sent follow-up questions.

We solicited feedback on initial drafts of our reviews from our criterion consultants, Executive Director, former Director of Research, Managing Director, Managing Editor, one Researcher from the experimental research division, and two Board Members.

After editing our comprehensive reviews and making our recommendation decisions (described below), we sent the reviews to the corresponding charities for approval, along with our conversation summaries and other supporting documents we intended to publish. Charities had the opportunity to request edits, including requests to remove confidential information or to correct factual errors. Nonetheless, all reviews represent our own understanding and opinions, which are not necessarily those of the charities reviewed. This year, all 11 of the charities for which we drafted comprehensive reviews agreed to have their reviews published.

Recommendations

After the majority of each comprehensive review was drafted, but before the reviews were entirely finished or sent to charities for approval, six members of the research team, our former Director of Research, our Executive Director, our Managing Director, and two Board Members met to discuss the selection of Top and Standout Charities. In preparation for this meeting, all participants indicated in a chart their individually prepared suggestions for Top Charities and Standout Charities. There was substantial initial agreement on the status of some charities, but not all. We discussed strengths and weaknesses of each charity and updated our votes in the chart when they changed following discussion. While the Board Members and former Director of Research provided points for discussion and their opinions on recommendation decisions, all decisions were made by votes from the research team, Managing Director, and Executive Director. At the conclusion of this first meeting, we had reached unanimous agreement on the recommendation status of three charities.

The same six research team members, Executive Director, and Managing Director met three more times over the next week and participated in several email threads to finalize our selection of 2018 Top and Standout Charities. We reached unanimous agreement on most of our recommendation decisions. The remaining decisions were made by majority vote.

This year was the first time we selected four Top Charities rather than three. We think that, overall, each of our Top Charities performs outstandingly well on our criteria. They have clear plans for effectively using additional funding and strong organizational structures, and they’ve demonstrated an interest in and capacity for evaluating their own programs. There was unanimous or near-unanimous consensus within the evaluation team that each of these charities should be selected as a Top Charity.

Our Standout Charities are those we didn’t select for a top recommendation, but nonetheless wanted to call to the attention of our readers because we think they are quite promising; we think that donations to these charities seem likely to have a relatively high expected value. We think some of these charities performed admirably on all our criteria, but not quite as well as our Top Charities. Other Standout Charities do especially well on only some of our criteria. For instance, they may work in a promising area, but lack a significant track record of impact. It took the most time and discussion to reach consensus on standout recommendation decisions when charities performed very well on some criteria and less well on others. In these cases we had numerous discussions in which the arguments for and against making a standout recommendation for the charities in question were presented. Once these points were established, we made the decisions according to a majority vote.

Important Considerations

A number of important questions arose in our discussions about which charities to recommend. We discussed the relative strengths and weaknesses of the charities we evaluated as well as Animal Charity Evaluators’ role in the animal advocacy movement.

Some of the questions we discussed are the same ones we tend to grapple with annually. For example:

- Is there an ideal number of Top and Standout Charities?

- How much money do we expect our recommendations to move to our Top Charities next year, and how does that affect the number of Top Charities we should select?

- How should we account for types of impact that are especially difficult to estimate? For instance, we have relatively little—or in some cases, no—evidence quantifying long-term impact and the impact of some relatively novel interventions.

Some other questions were more significant this year than in previous years. For example:

- Compared to farmed animal advocacy in the U.S. or Europe, how valuable is animal advocacy in other regions, such as Latin America?

- How should we weigh the anonymous non-leadership calls and the information from the culture surveys when evaluating an organization’s culture?

- Given our recent report on the allocation of resources within the movement, how should marginal funding be distributed among different types of outcomes?

- How should we weigh more direct indicators of marginal impact (such as intervention type or cost-effectiveness estimates) against more indirect indicators (such as efforts to build diversity and inclusion within the movement) when the latter likely have significant but harder-to-quantify impact?

We think that answering any of these questions well is very difficult, and that this activity is laden with uncertainty. Still, in line with our mission, we continually work to improve our understanding of effective animal advocacy.

For further information about our thinking, please watch our blog for upcoming posts on several topics, including aspects of our 2018 evaluation process that surprised us and a chart comparing our current Top and Standout Charities.

[1] We identified charities that were in one or more of the following groups as valuable for us to evaluate:

- Top Charities from 2017 and Standout Charities from 2016

- Charities that seemed to be promising candidates for recommendation, including:

- Charities that we had flagged in previous rounds as strong candidates for an evaluation

- Charities doing unusual work or some type of animal advocacy-related research that we thought might be promising

- Charities that seemed to be influential and plausibly cost-effective in countries outside the U.S.

- Charities that we knew had made significant progress in their work compared to the last time we evaluated them

- Large or well-known charities that we are often asked about but have not yet evaluated

- Charities that work in areas other than farmed animal advocacy that are plausibly cost-effective

- New charities that might not have a long track record, but that we wanted to learn more about

[2] Of the charities that declined to participate, most responded that they were too busy at the time and/or that they preferred to wait until 2019 because they expected their situation to be changing in the near future.

[3] While we asked all charities to send out our culture survey, we did not make participation in the review process contingent on their doing so. Three charities asked to provide us with the results of similar staff surveys they had recently conducted in lieu of administering our survey.

About ACE Team

Our compassionate team of researchers, communicators, advocates, and experts come from all over the world. We're united by our shared goal to reduce animal suffering and use evidence and reason to guide our efforts. Together, we write to reflect on our work, share what we're learning, and support a world where all animals can flourish.
