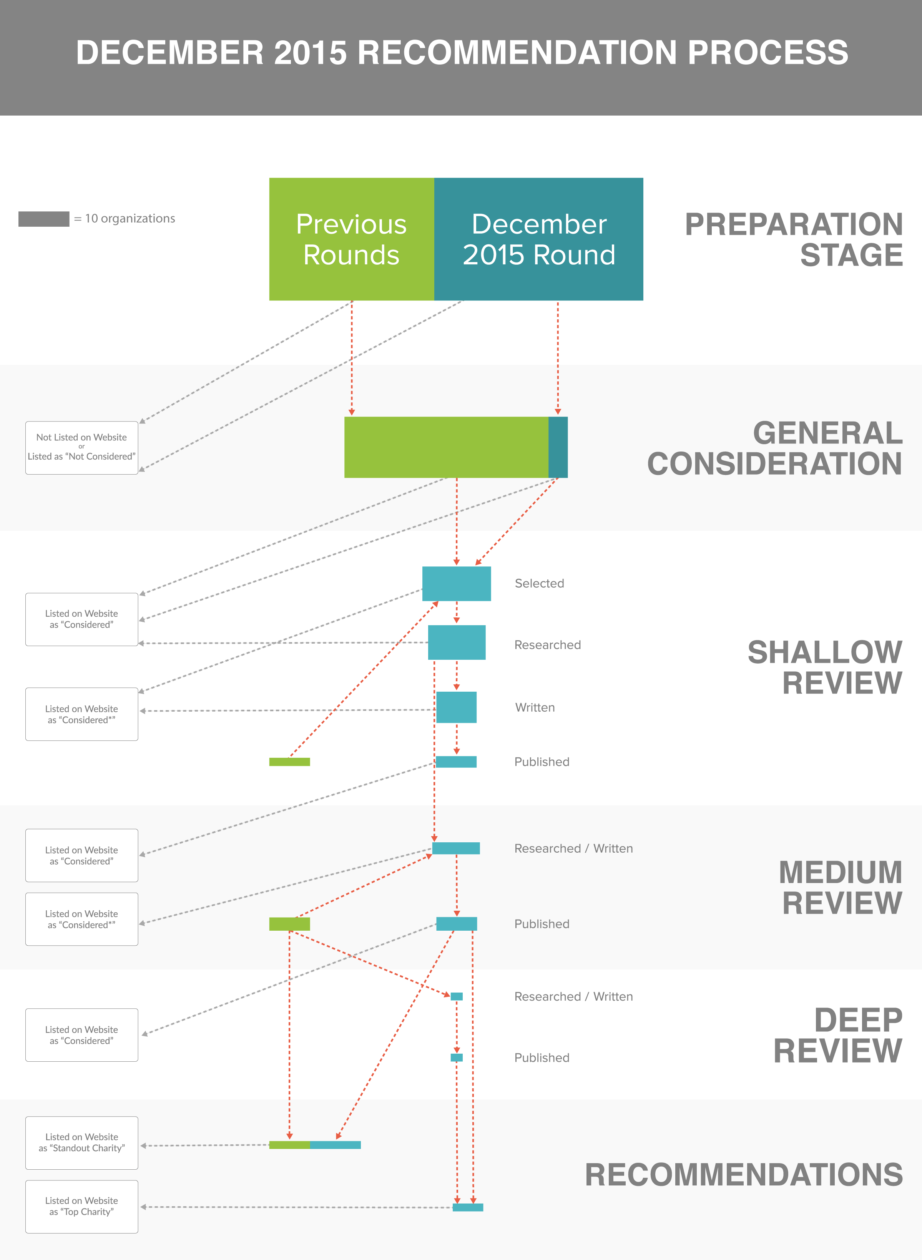

This page discusses the specific process that led to our December 2015 recommendation update; we discuss more general features of the process elsewhere.

Overview

We regularly conduct evaluations of charities at a variety of levels of detail and update our recommendations periodically as a result of these evaluations. Prior to December 2015, our most recent recommendation update took place in December 2014. During the early part of 2015 we focused our attention on tasks outside the organization review cycle, to allow time for changes in organizations to occur before we reconsidered any groups we had previously worked with.

- We conducted the basic consideration phase of our review cycle in May.

- We conducted shallow reviews starting in June. Some communications regarding shallow reviews extended until November.

- We conducted medium reviews starting in July. All medium reviews were completed in late November.

- We conducted a deep review starting in June. It was completed in late November.

- We published our recommendations on December 1st, 2015.

Our research team did most of the work on this round of reviews, with help from the Executive Director throughout the process, from interns in transcribing conversations and copy-editing materials, and from the Director of Communications in publishing the results.

Basic Consideration

We considered organizations from several sources during this round. We had received requests to be considered from some organizations, and considered all these groups for a review or recommendation. We also considered some groups that third parties had recommended that we evaluate. Additionally, we used a list of 316 animal advocacy organizations operating in China that another animal advocate had provided us, from which we selected those that seemed like possible candidates for our reviews. Finally, we reconsidered a number of groups which we had considered in the past. These were groups that in earlier rounds had been close to the threshold for some further consideration but had been excluded for time reasons, or that we wanted to reconsider for some particular reason having to do with changes in their programming or in our understanding of their activities. We also decided to update our top charity reviews to ensure that we had the latest understanding of their work.

Our internal master list at the end of this process included 268 organizations and websites, 15 more than the 253 organizations and sites we listed internally at the end of the previous review process.1 We added all 15 of these new entries to the 156 previously included on our published list of organizations, which would have brought that list to 171; because one organization subsequently requested not to be listed on our site, the total number on that list is 170. All of the newly added organizations were placed in the “Charities Considered” section of the list, or (based on later review) on our standout charities list.
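As a quick check of the tallies above, the short Python sketch below recomputes them from the figures quoted in this section. The variable names are hypothetical and chosen only for illustration; they do not describe any tool used in the review process.

```python
# Figures quoted in the paragraph above; variable names are illustrative only.
internal_now = 268        # internal master list at the end of this round
internal_previous = 253   # internal master list at the end of the previous round
published_previous = 156  # published list before this round
delisted = 1              # one organization asked not to be listed

# Organizations newly added to the internal list during this round.
newly_added = internal_now - internal_previous

# Published list after adding the new organizations and removing the delisted one.
published_now = published_previous + newly_added - delisted

print(newly_added)    # 15
print(published_now)  # 170
```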

In 2014 we added many organizations to the internal list but not the published one, because each of those organizations either didn’t have an English webpage (so we couldn’t evaluate it), had a webpage that did not seem to belong to an active organization, or primarily performed direct animal care. We chose not to add any groups to the internal list alone in 2015. We did leave most of the groups active in China off our public list, for the same reasons, listed above, that we used in 2014. Because we already have a separate list of these organizations, we also didn’t feel a need to transfer them all to our master list at this time, though we may do so in the future. If we were to merge this list with our current internal master list, we expect the resulting list would have 579 entries. Other than the groups on the China list, we didn’t consider any organizations that we didn’t add to the published list. Some of the groups we added primarily engage in direct animal care; since they specifically requested to be considered, we added them to our list even though we might otherwise not have done so.

Shallow Reviews

We set a target of conducting around 30 shallow reviews during this round, including any shallow reviews that were updates to shallow reviews we had conducted for previous rounds.

Selecting organizations to review

We compiled a list of possible organizations to review from the organizations considered in the previous round. We included on this list:

- All groups that had specifically requested to be considered, or which a third party had asked us to consider.

- All groups that in previous rounds we had flagged as strong candidates for a medium or shallow review, but not reviewed at that depth.

- All groups from our published list of organizations (as of the end of the previous review cycle) that were marked as working on farm animal issues and which we had not yet reviewed.

- Several groups doing unusual work or some type of animal advocacy related research that we thought might be promising.

- Several groups that we knew had made some significant progress in their work compared to the last time we considered them, or which had asked us to reconsider them in 2015, when they expected to be more ready for evaluation.

- The organizations active in China that had a reasonable amount of English content on their websites and appeared to do some work on farm animal issues.

- A few organizations that had not wanted to participate in our first review process in early 2014, and that we occasionally receive questions about.

At this point we had 58 organizations on our list; some fit more than one of the descriptions above. Of these, 49 were organizations we had not previously reviewed. We narrowed the list by considering individually how promising we thought each group was as a recommendation candidate. Each member of our research team considered every group, and the team had two conversations about which groups to move forward for review. The Executive Director gave additional feedback on groups for which our decision was unclear. Ultimately, those we chose to review included all the groups we had thought were doing unusual or promising work, and a few groups from each other category.

At this point, we had 30 organizations on our list for shallow reviews, four of which were updates to previous reviews.

Conducting reviews

We conducted reviews for each of these organizations based on their website and other publicly available information. Although we do not generally contact organizations during the early part of the shallow review process, we consider basic financial information such as assets, revenues, and expenditures for recent years crucial to the formation of an accurate review. Nonprofits registered in the United States, and in some other countries, submit standardized financial information to the government, and in some countries this information becomes easily available to the public through the government or through third parties such as GuideStar. However, we were not able to find this information for all organizations, either through their websites or through standardized sources (which did not seem to exist or provide the same information for all countries). Early in the review process, we contacted the 7 organizations for which we could not find basic financial information to request it. Of these, 2 replied with enough information that we could proceed, and from this point forward we treated them like the other groups we were reviewing. The other 5 never responded to our contact, and we marked them as “declined to be reviewed” without proceeding further in the review process.

Before writing the shallow reviews, we selected 7 of the shallowly-reviewed organizations to proceed to the medium review process. We did not write shallow reviews for these organizations, or for organizations whose previous shallow reviews we found to be still substantially accurate. We wrote a total of 16 shallow reviews. We then sent these to the organizations involved for corrections and approval. Ultimately, 6 of these groups allowed us to publish versions of our reviews. Reviews that we altered after communicating with the organization involved still represent our own understanding and opinions, which are not necessarily those of the group reviewed. The other 10 groups either requested not to be reviewed or did not reply to our attempts to contact them; not all had seen the text of our review when they made that decision. We marked all of these groups as “declined to be reviewed” except one that asked that we not mention their name on our website.

Medium Reviews

We set a target of conducting 6 new medium reviews, and updating the reviews for our 3 top charities from 2014. We considered also updating the reviews for our standout charities or other organizations on which we had conducted medium reviews, but decided that, based on the results of updating May 2014 reviews for December 2014, we did not expect another revision in such a short time period to be valuable. For future rounds, we will be asking previously-reviewed organizations other than our top charities to let us know in advance if they have developments they think might substantially affect our reviews, in addition to updating all reviews a minimum of once every three years. We plan to continue updating top charity reviews yearly, because we feel responsible for providing current information about groups we recommend donating to.

Selecting organizations to review

To select new charities on which to conduct medium reviews, we held a meeting after researching the shallow reviews but before writing them. Our Research Associate and Research Manager each composed a private list of organizations for possible review, indicating how strongly they felt that each listed group should be reviewed. The two lists overlapped heavily; in total they named 14 organizations, 8 of which appeared on both lists.

We discussed every organization on each list and decided on 6 organizations to approach regarding a medium review, as well as 2 alternates in case some organizations did not want to participate. We deliberately chose some organizations working on different projects than those of our then-recommended charities, in case this would provide an opportunity to make stronger recommendations than we already had. 5 of the 6 organizations we approached agreed to participate. We did not hear back from the sixth until after we had already filled their slot with the first group on our list of alternates. Despite the delay, they were interested in participating; since our schedule did not at that point leave time for conducting an additional medium review, we decided to consider evaluating them in 2016.

Conducting reviews

We conducted the new reviews according to our general process for medium reviews. Updates to existing reviews followed a similar process, except that our conversations focused more closely on specific areas of interest, rather than trying to get an overview of the whole organization’s activities.

We provided organizations with conversation summaries, reviews, and other documents as we decided what we wanted to publish about each group and what our recommendations would be. Five new organizations, and the three organizations whose reviews we updated, allowed us to publish reviews; for the one remaining organization, there were some communication delays, but we expect to publish the review soon. Summaries of the conversations we had with each organization’s staff are also available separately.

We were glad that all medium review organizations wanted us to publish the review we conducted of their work. This was not the case in December 2014. We think we selected organizations this year that were likely to allow us to publish, but this result might just be due to chance. Additionally, we named a large proportion of the medium review organizations as standout charities, which may have increased their willingness to work with us to publish the reviews.

Deep Review

We conducted our first deep review in 2015, as a trial of the process. Our intention was to see how much time it would take, whether we would learn information potentially relevant to our recommendations that we could not learn in a more efficient way, and whether the resulting review would be more informative and persuasive for donors than a medium review.

Selecting an organization to review

We chose to conduct this review on The Humane League, because:

- They were one of our top-recommended groups in 2014, so they had a strong chance of being chosen for a top recommendation in 2015. We like to have detailed reviews of as many groups as possible, but we think it’s most important to provide detailed reviews of our top-recommended groups and other groups near the top of our list, since those are the reviews we expect to be read most frequently, and since this best helps explain our selection process for readers.

- We had conducted two medium reviews of THL and had several other conversations and interactions with them. This meant we already knew a lot of the things about them that we would expect to learn about any group we worked with over time on other projects. If we learned new things during the deep review, we could be relatively sure they were things we would only learn through adding new steps to our review process.

- They had been very open and responsive during previous review processes and collaborations in general. We expected to ask a lot of whichever group we worked with during the deep review, so we wanted to choose a group that would be able and willing to spend extra time with us on the project.

Conducting the review

We created a plan for the review, including a list of people and groups of people we would like to talk to. In June, we discussed the idea of conducting a review with David Coman-Hidy, THL’s Executive Director, and asked him to put us in touch with as many as possible of the people on the list. Over the next several months, he helped us speak with most of them, including everyone on the list who actually worked for THL. We also conducted four site visits at various THL offices and events. Our last interviews were at the beginning of October.

We wrote conversation summaries and notes on site visits throughout the process. We sent conversation summaries first to our conversation partner for approval, and then to David to approve on behalf of THL. We sent site visit notes only to David, unless they also covered a conversation in detail, in which case we also sent them to the parties involved in the conversation. We talked to some people who did not work for THL, including donors, teachers whose classes participate in their humane education program, and collaborators from other advocacy organizations. We did not seek to publish full summaries of all these conversations, in some cases preferring to ask less of our conversation partners’ time or to better protect their confidentiality. In total, we published notes on 10 conversations and site visits.

We wrote and published a review for THL that contained all the sections we typically include in a medium review, as well as additional sections concerning criticisms of the organization and the methodology of the review. We included THL in our recommendation decision process along with the groups on which we had conducted medium reviews.

Recommendations

After the research on the medium and deep reviews was finished, but before the writing was finished, our Executive Director, Research Manager, and Research Associate each individually decided whether each charity on which we’d conducted a medium or deep review should be a top recommendation, a standout charity, or neither, or noted that they were not certain. We compared lists and discussed each group. In some cases we were very certain and agreed with each other; in others we disagreed, or some of us were not sure what status was appropriate for a particular group.

After an extensive conversation on the subject, we had reached what turned out to be our final decision about each group. However, we continued to talk over the next few days to make sure we were all comfortable with the decisions, because our individual opinions had varied substantially and because we had selected a larger number of standout organizations than some of us expected. We’re limited in how much detail we can provide about this decision process, since most of it had to do with specific aspects of individual organizations. We knew how we planned to categorize each group before we reached out to any about publication of their review.

After this round, we had 3 top charities, the same top charities we had at the start of the round. We also had 9 standout organizations, including the 4 we had at the start of the round. We had decided during the course of the previous round that we did not want to place a cap on the number of standout charities; this number could continue to grow in following rounds. We wanted the number of top charities to remain small, so that our recommendations provide a clear call to action. We don’t expect the number of top charities to grow much in the future.

Additional Information

Updated Recommendations: December 2015

Archive: 2015 List of Considered Charities