This page contains a description of the specific process that led to our 2017 recommendation update; a more general description of our evaluation process is available elsewhere.

Table of Contents

- Overview

- Basic Consideration

- Exploratory Evaluations

- Comprehensive Evaluations

- Recommendations

- Additional Information

Overview

We periodically conduct evaluations of charities at various levels of detail and update our recommendations as a result of those evaluations. Prior to November 2017, our most recent recommendation update took place in November 2016.

During the first few months of 2017, we made some updates to our charity evaluation process and conducted some of the foundational and intervention research that informs our reviews. Our intervention research for 2017 actually extended through our charity evaluation process and into December. Our formal evaluation process took place from May through November. The timing of some noteworthy events follows:

- We conducted the “basic consideration” phase of our review cycle in May.

- We began conducting exploratory reviews in June. Some communications regarding exploratory reviews extended into November.

- We began conducting comprehensive reviews in July. Drafts of our comprehensive reviews were completed in October.

- We finalized our recommendation decisions in late October and communicated them to the charities under review on October 25, 2017.

- We published our recommendations on November 27, 2017.

The members of our research team who are not primarily involved with the animal advocacy research fund or the experimental research division did most of the work on the reviews, with help from ACE’s Executive Director. A Research Intern helped complete cost-effectiveness estimates, a team of volunteers helped transcribe and summarize conversations, and an independent contractor helped copyedit comprehensive reviews. Our Executive Director, research scientist, research editor, and two Board Members provided feedback on a draft of the comprehensive reviews. Finally, our communications team, with the help of a Communications Intern and our Director of Operations, published and announced the results.

Basic Consideration

We began our 2017 evaluation process by compiling an internal list of charities to consider evaluating. The list included:

- Charities that requested to be evaluated

- Charities that members of the ACE staff and board suggested evaluating

- Charities that third parties asked us to evaluate

- Charities that we had considered or evaluated in the past that:

  - had been close to the threshold for further investigation but had been excluded for some reason

  - we wanted to reconsider due to changes in their programming or our understanding of their activities, or

  - we had last reviewed in 2014, as those reviews would expire in 2017

- International charities that we had not previously evaluated and that we understood to have significant influence in their home countries

- Charities that we had identified as potentially promising during a systematic search for charities in France, Germany, Italy, and Spain[1]

We also planned to reevaluate our 2016 Top Charities, as well as any Standout Charities that had not been evaluated for more than a year, to ensure that we had an up-to-date understanding of their work.

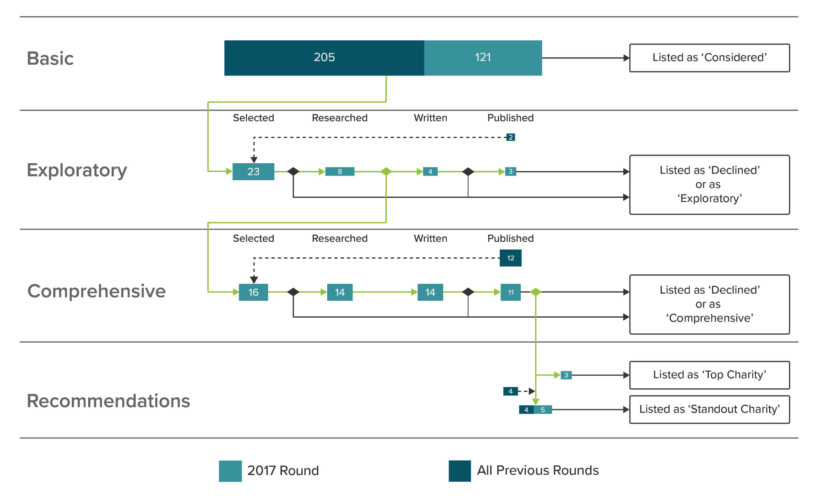

At the end of this process, we had a list of 629 charities to consider evaluating in 2017, 60 of which were groups that we had evaluated or considered before and 569 of which were groups that we had not. Our list of charities now includes 326 organizations and websites, up from the 205 organizations and sites we listed before the commencement of the 2017 review process. We chose not to include all of the charities we considered in this round on our published list because many of those charities were humane societies and/or companion animal shelters located in France, Germany, Italy, or Spain, and we thought there was limited value in adding those groups to our published list. We added 121 new groups to our list and updated our entries for a further 60 charities.

Exploratory Evaluations

Selecting Charities to Evaluate

We set a rough target of evaluating approximately 37–42 charities this year. That target included all exploratory evaluations, new comprehensive evaluations, and the reevaluation of our 2016 Top Charities and six of our 2016 Standout Charities whose most recent published reviews dated from 2015. We then reviewed our list of 629 charities to consider for evaluation in 2017 and worked to identify the charities that seemed most valuable for us to evaluate.

We identified charities that were in one or more of the following groups as valuable for us to evaluate:

- Charities that seemed to be promising candidates for recommendation, including:

  - Charities that we had flagged in previous rounds as strong candidates for an evaluation

  - Charities doing unusual work or some type of animal advocacy-related research that we thought might be promising

  - Charities that seemed to be influential and plausibly cost-effective in countries outside the U.S.

  - Charities that we knew had made significant progress in their work compared to the last time we evaluated them

- Large or well-known charities that we are often asked about but have not yet evaluated

- Charities that work in areas other than farmed animal advocacy that are plausibly cost-effective

- New charities that might not have a long track record, but that we wanted to learn more about

In June 2017, one member of the research team excluded 499 groups from evaluation, based mainly on a brief judgment, drawn from each group’s website, about whether the group was currently active and whether it worked in a plausibly high-impact area. Three members of the ACE research team then individually considered whether or not we should evaluate each of the remaining 130 groups on the list. The three staff agreed on 35 charities to be selected for evaluation and 95 charities to be excluded from evaluation. Soon after that meeting, our Research Director sorted the 35 charities selected for evaluation such that all the charities that had previously received a comprehensive evaluation were slated to receive a new comprehensive evaluation and the remaining 23 charities were initially slated for an exploratory evaluation that could later be updated to a comprehensive evaluation.
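The counts in this section and the previous one can be cross-checked with a few lines of arithmetic. The following is a minimal sketch in Python; the variable names are illustrative labels of our own choosing, and the figures are copied from the text above.

```python
# Counts quoted in the text; variable names are illustrative only.
considered = 629            # charities on the internal list for 2017
previously_seen = 60        # groups evaluated or considered before
new_groups = 569            # groups not previously considered
excluded_first_pass = 499   # excluded by one researcher in June 2017
selected = 35               # agreed on for evaluation
excluded_second_pass = 95   # agreed on for exclusion
exploratory = 23            # initially slated for exploratory evaluation

# The list splits cleanly into previously seen and new groups.
assert previously_seen + new_groups == considered

# Groups left for the three-person review after the first pass.
remaining = considered - excluded_first_pass
assert remaining == selected + excluded_second_pass

# Selected charities that had already received a comprehensive evaluation
# (derived here; the text does not state this number directly).
slated_comprehensive = selected - exploratory

print(remaining, slated_comprehensive)  # prints: 130 12
```

The derived figure of 12 matches the later breakdown of charities that had previously been comprehensively reviewed: three Top Charities, six Standout Charities, and three others.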

Conducting Exploratory Evaluations

For each charity chosen for exploratory evaluation, we conducted basic background research that was relevant to our evaluation criteria. For instance, we considered basic financial information such as assets, revenues, and expenditures from recent years.[2] During this background research, we relied upon the charities’ websites and other publicly available information.

Next, we contacted each charity to set up an interview with their leadership. In the time between this initial point of contact and when we began drafting exploratory reviews, 15 of the 23 charities selected for exploratory review either directly declined to be reviewed or didn’t respond to our emails in time for us to continue with the exploratory evaluation process.

We then attempted to set up calls with the eight remaining charities selected for exploratory review. Our Director of Research, Researcher, and Research Associates conducted the phone calls during our exploratory evaluation process. In one case, we weren’t able to arrange a call with the charity and instead exchanged emails with them in order to collect the equivalent information. We recorded all the calls for internal use but chose not to publish call summaries for those charities that were only subject to an exploratory review because we thought the added value of publishing call summaries wasn’t worth the time it would require to transcribe our conversations, copyedit the transcripts, and obtain charities’ approval of the summaries.

After conducting background research and phone calls (or equivalent) with each charity but before drafting exploratory reviews, four[3] members of the research team collectively selected four of the eight charities under exploratory review for comprehensive review (see below). We did not write exploratory reviews for those four charities or for the 15 that had already declined to be reviewed.

We then wrote exploratory reviews for the four remaining charities that we had selected for exploratory review. We sent the reviews to the corresponding charities for corrections and approval. Ultimately, three charities allowed us to publish their exploratory reviews. All published reviews were slightly altered after we communicated with the charity, but they still represent our own understanding and opinions, which are not necessarily those of the charity reviewed.

Comprehensive Evaluations

Selecting Charities to Evaluate

In total, we selected 16 charities for comprehensive reviews this year. This included all three of our Top Charities from 2016, six of our Standout Charities from 2016, four charities that were initially selected for exploratory review in 2017, and three other charities that we had previously comprehensively reviewed.

To select which charities—of those that had originally been selected for exploratory review—would be chosen for a comprehensive review, four members of our research team met after completing the research for the exploratory reviews but before writing the exploratory reviews. In that meeting, the four staff collectively chose four charities to comprehensively evaluate. These charities were selected based on factors such as how likely we thought each of the charities was to be recommended and how useful we thought the knowledge we would acquire and potentially publish from the comprehensive evaluation would be. Any borderline cases were further discussed via email until all five members of our team reached a consensus.

Conducting Comprehensive Evaluations

We conducted evaluations according to our general process for comprehensive reviews. We contacted each charity with which we had not yet spoken to set up an interview with their leadership. At this point, two of the 16 charities declined to be reviewed.

We sent some initial follow-up questions to each charity selected for comprehensive review after we had completed the leadership calls. After completing some of the calls, we began drafting comprehensive reviews and call summaries for each of the 14 remaining charities and sent further follow-up questions to each of these charities. A board committee, our research scientist, and our Executive Director then read an early draft of our comprehensive reviews and provided feedback, both on our general evaluation process and on specific reviews. They also provided some initial thoughts about which charities should receive Top or Standout recommendations.

After editing our comprehensive reviews and making our recommendation decisions (described below), we sent the reviews to the corresponding charities for approval, along with our conversation summaries and other supporting documents we hoped to publish. All published comprehensive reviews were altered after we communicated with the charity, but they still represent our own understanding and opinions, which are not necessarily those of the charity reviewed. This year, three of the 14 charities for which we drafted comprehensive reviews declined to have their reviews published.
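As a consistency check on the comprehensive review numbers, the tally below adds up the four groups of selected charities and then subtracts those that declined at each stage. It is a minimal sketch in Python with illustrative variable names; the final count of published reviews is derived from the figures above and is not stated directly in the text.

```python
# Composition of the comprehensive review pool, as quoted in the text.
top_2016 = 3                    # Top Charities from 2016
standout_2016 = 6               # Standout Charities from 2016
moved_from_exploratory = 4      # initially selected for exploratory review
other_previously_reviewed = 3   # other previously comprehensively reviewed

selected = (top_2016 + standout_2016
            + moved_from_exploratory + other_previously_reviewed)
assert selected == 16

# Two charities declined to be reviewed after being contacted.
declined_review = 2
drafted = selected - declined_review
assert drafted == 14

# Three charities declined to have their drafted reviews published.
declined_publication = 3
published = drafted - declined_publication  # derived, not stated in the text

print(selected, drafted, published)  # prints: 16 14 11
```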

Recommendations

After the majority of each comprehensive review was drafted, but before the reviews were entirely finished or sent to charities for approval, five members of the research team and our Executive Director held two meetings and participated in several email threads to select our 2017 Top and Standout Charities.

In the first meeting, each of us shared lists we had prepared individually of our suggestions for Top Charities, Standout Charities, not recommended charities, and borderline cases. There was substantial agreement on the recommendation status of most charities and diverging opinions about the recommendation status of a few charities. After discussion, we agreed about the recommendation status of eleven charities. We continued to discuss the remaining three charities over the next several days, and then we held a second meeting to finalize our decisions. We will not provide more details about our decision process here, though most of it involved the specific aspects of individual organizations that we describe in our reviews. We reached unanimous agreement on almost all of our recommendation decisions. For the remainder, there was very high, but not quite unanimous, agreement, and in those cases the final decisions were supported by a strong consensus.

Additional Information

The Process Leading to Our 2017 Recommendations (Blog Post)

Updated Recommendations: 2017 (Blog Post)

Archive: 2017 List of Considered Charities

Evaluation Process Archive

[1] Based on a cursory analysis, we thought these countries were possibly impactful places to work and relatively likely to have some effective charities. We plan to consider systematically searching other countries next year.

[2] Nonprofits registered in the United States, and in some other countries, submit standardized financial information to the government, and in some countries this information becomes easily available to the public through the government or through third parties such as Guidestar.

[3] In July 2017, two staff joined the research team. Those additional members of the research team explain the increase in number here and elsewhere when compared to the number of staff involved in the process prior to July 2017.