Process Leading to our 2020 Recommendations

This post describes the specific process that led to our 2020 charity recommendations.

Overview

Our evaluation process took place from June to November:

- Late June: Selected charities to invite to be reviewed

- Early July to early November: Invited charities to participate, gathered information from charities, drafted our comprehensive reviews, solicited feedback from ACE’s board and Executive Director

- Early November: Sent charities completed drafts of the reviews, finalized our recommendation decisions

- Mid November: Addressed charities’ feedback on our drafts, solicited charities’ approval to publish

- Late November: Published our recommendations on November 24, 2020

This process led to the publication of 12 new or updated comprehensive reviews, along with an update to our list of recommended charities.

Updates to Our Evaluation Process

We made several updates to our charity evaluation process this year. For example, we updated our menu of outcomes with the intention of better capturing and communicating both how the animal advocacy movement works as a whole and how individual charities operate within the movement. This update was reflected throughout the reviews, especially in our theory of change diagrams and our evaluation of charities’ programs and cost effectiveness.

ACE places high priority on using accurate and reliable evidence in our work. This year, we intensified our verification process and devoted a slightly larger proportion of our time to verifying claims reported by charities. To verify claims, we relied on publicly available information, internal documents, media reports, and independent sources, and we often followed up with charities for further information or details. For each charity, we verified at least one key result per program.

We’re always looking for ways to make our evaluation process run more smoothly and to allow our researchers more time for drafting the reviews and making recommendation decisions. This year, we made a number of process improvements that we believe increased the efficiency of our work. In our interactions with charities, we asked the organizations fewer questions to limit the burden placed on them, and we assigned a single person to handle communication with all charities. We also replaced the calls with charities’ leadership with written questionnaires, which freed up capacity because we no longer had to produce call summaries. Internally, we implemented an agile operational model that allowed the evaluations team to cooperate more closely on drafting reviews and to adjust our process continuously.

Our Selection Process

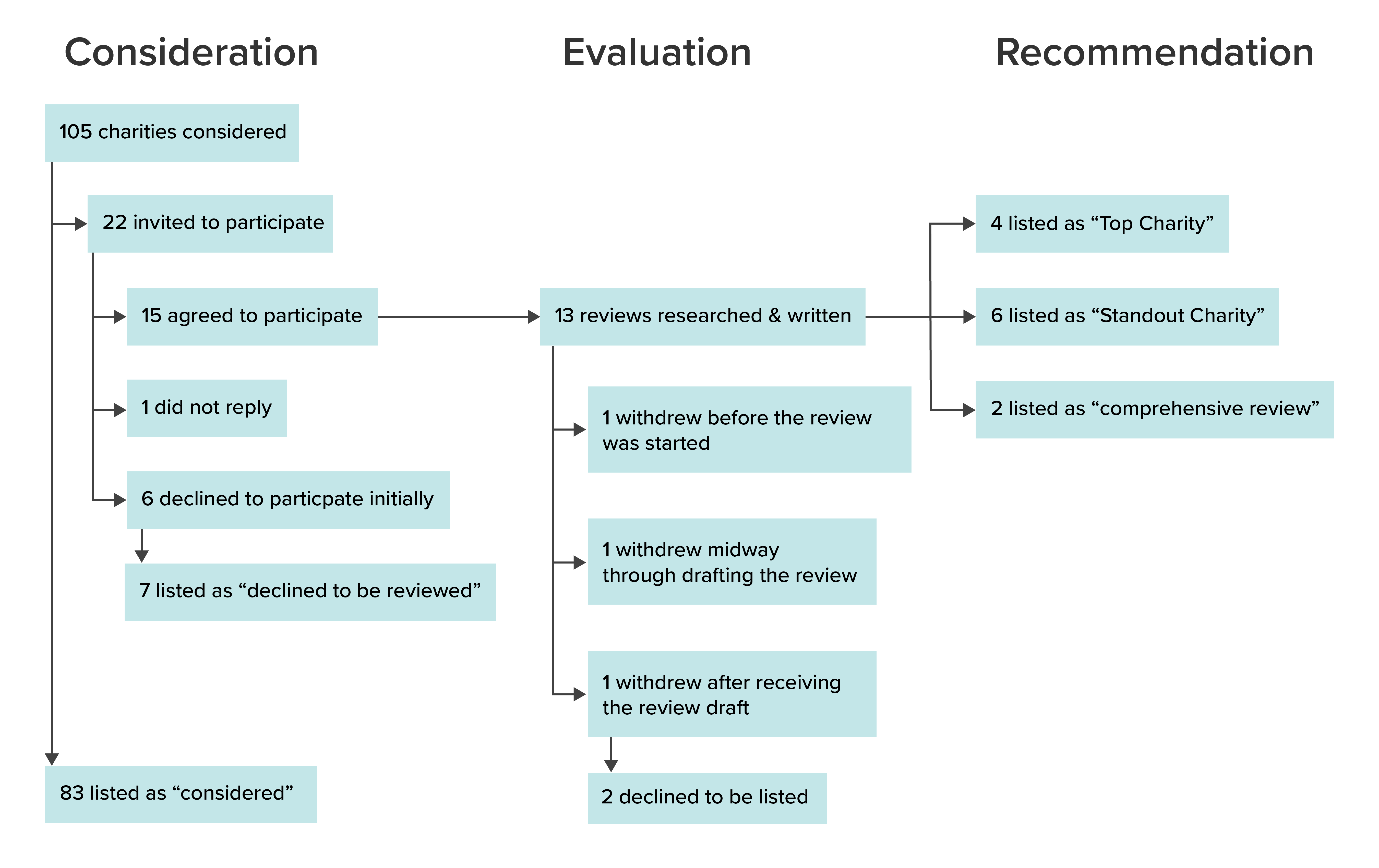

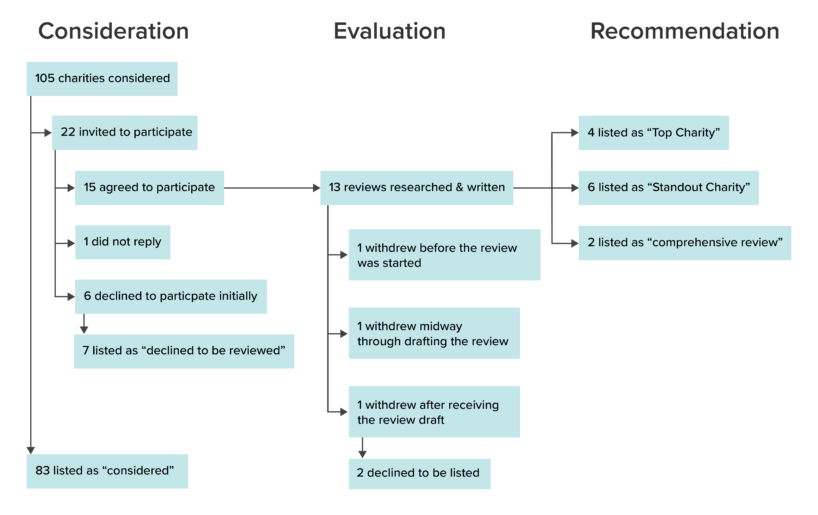

We began our evaluation process by compiling an internal list of 105 charities to consider, and then reviewed the list to identify the charities that seemed most valuable to spend time evaluating. We initially selected 22 charities for evaluation, based on a variety of factors—including how likely we thought each charity was to perform well on our evaluation criteria, and how useful we thought the knowledge we would acquire and potentially publish from the comprehensive evaluation would be.

We sent each of these 22 charities a copy of our Charity Evaluation Handbook and formally invited them to participate in the review process. Six of the 22 charities declined to be reviewed,[1] and one charity did not respond to our request to be evaluated. We thus ended up with a total of 15 charities to evaluate: our four Top Charities from 2019, two Standout Charities we last evaluated in 2018, and nine other charities we had not evaluated in at least the past three years. One of these 15 charities withdrew before the review process started, one withdrew midway through the review process, and one withdrew after receiving the draft of their review, leaving us with a total of 12 evaluated charities.
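The counts in the paragraph above can be tallied as a simple funnel. The following is a minimal sketch for checking the arithmetic, not part of our evaluation tooling; the variable names are illustrative, and the figure for drafted reviews assumes that the charity that withdrew midway did not receive a drafted review.

```python
# Counts taken from the text above; variable names are illustrative only.
considered = 105             # charities on the internal list
invited = 22                 # charities initially selected and invited
declined = 6                 # declined to be reviewed
no_response = 1              # did not respond to the invitation
withdrew_before_review = 1   # withdrew before the review process started
withdrew_midway = 1          # withdrew midway through the review process
withdrew_after_draft = 1     # withdrew after receiving the draft review

agreed_to_participate = invited - declined - no_response
# Assumption: no review was drafted for the two charities that withdrew earlier.
reviews_drafted = agreed_to_participate - withdrew_before_review - withdrew_midway
reviews_published = reviews_drafted - withdrew_after_draft

funnel = {
    "considered": considered,
    "invited": invited,
    "agreed to participate": agreed_to_participate,
    "reviews drafted": reviews_drafted,
    "reviews published": reviews_published,
}

for stage, count in funnel.items():
    print(f"{stage}: {count}")
```

Under these assumptions the tally gives 15 participating charities, 13 drafted reviews, and 12 published reviews.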

Evaluations

As part of the evaluation process, we asked each charity to provide information and documentation about their ongoing programs, accomplishments, finances, and strategy. This year, we also asked charities about the effects of the COVID-19 pandemic on their work. To assess workplace culture, we distributed a survey to each charity’s staff.

While drafting the reviews, we solicited feedback on each criterion from ACE’s Executive Director and three board members. Once the reviews were drafted, we sent them to the charities for feedback and approval. Before approval, charities had the opportunity to request edits, including requests to remove confidential information or to correct factual errors. Nonetheless, all reviews represent our own understanding and opinions, which are not necessarily those of the charities reviewed. This year, 12 of the 13 charities for which we drafted reviews agreed to have their reviews published.

Recommendations

After the comprehensive reviews were drafted, but before they were approved by charities, seven members of ACE’s team and ACE’s Executive Director held several meetings to discuss the selection of Top and Standout Charities. In preparation for these meetings, staff read and considered all information in each charity’s review, and provided initial impressions of recommendation decisions.[2] We observed substantial initial agreement on the status of some charities, but not others. We subsequently discussed the strengths and weaknesses of each charity in depth, candidly challenged each other’s assumptions and reasoning, and considered feedback and impressions from ACE’s board.

In the end, we selected four Top Charities. We think that, overall, each of our Top Charities performs well on our evaluation criteria. They each conduct effective programs, can make use of additional funding, and have a sustainable work culture. We had either consensus or a majority of supporting votes among the evaluation team that each of these four groups should be selected as Top Charities.

We also selected six Standout Charities. Our Standout Charities are those which we did not select for a top recommendation but nonetheless wanted to call to the attention of our readers because we think they are promising. We think that donations to these charities still seem likely to have a relatively high expected value. We had a majority of supporting votes among the evaluation team that each of these six groups should be selected as Standout Charities.

Participation Grants

We offer our sincere gratitude to all charities we evaluated this year. Participating in our process takes time and energy, and we are grateful for organizations’ willingness to be open with us about their work. In recognition of this, we awarded participation grants of $2,000 to the charities that we evaluated this year. These grants are not contingent on publication; we also award them to charities whose reviews we do not publish, provided they made a good-faith effort to engage with us during the review process.

[1] Of the charities that declined the opportunity to participate, most responded that they were too busy at the time or that they preferred to wait until 2021 to be evaluated.

[2] The one exception was a member of the research team who, because of a conflict of interest, was not involved at all in the evaluation process for one charity.

About ACE Team

Our compassionate team of researchers, communicators, advocates, and experts come from all over the world. We're united by our shared goal to reduce animal suffering and use evidence and reason to guide our efforts. Together, we write to reflect on our work, share what we're learning, and support a world where all animals can flourish.

ACE is dedicated to creating a world where all animals can thrive, regardless of their species. We take the guesswork out of supporting animal advocacy by directing funds toward the most impactful charities and programs, based on evidence and research.
