This blog post provides a brief discussion of the specific process that led to our 2016 recommendation update. For a more detailed description of the process, see our 2016 Evaluation Process page. For a general description of our evaluation process, read about our General Evaluation Process.

Overview

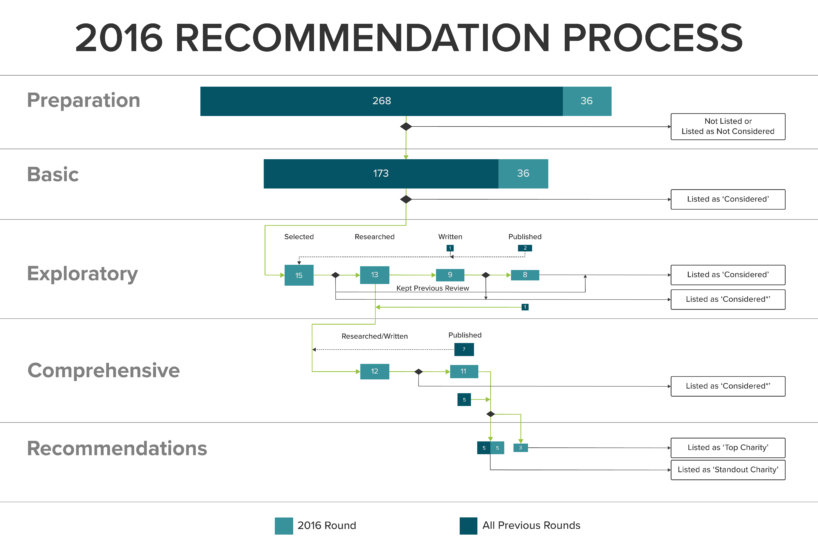

Our 2016 evaluation process took place from June through November, as follows:

- We conducted the “basic consideration” phase of our review cycle in June.

- We began conducting exploratory reviews in late June; some communications regarding exploratory reviews extended until November.

- We began conducting comprehensive reviews in September. Drafts of our comprehensive reviews were completed in early November.

- We finalized our recommendation decisions on November 4, 2016.

- We published our recommendations on November 28, 2016.

The process resulted in the publication of eight new exploratory reviews, 11 new or updated comprehensive reviews, and an update to our top and standout recommendations.

Basic Consideration

We began our 2016 evaluation process by compiling an internal list of charities to consider evaluating. The final list contained 64 charities. Thirty-six of them were groups we had never considered or evaluated before. Seven were Top or Standout Charities from 2015, which we planned to reevaluate to ensure that we had the most up-to-date understanding of their work.

Exploratory Evaluations

From our list of charities to consider, we selected a total of 20 charities to evaluate this year, including the seven Top and Standout Charities due for reevaluation. We also chose two charities to place on reserve in case some of the 20 did not wish to be evaluated, and we chose to conduct phone calls with three charities that we had previously evaluated, in order to decide whether to update their reviews.

This year, two of our selected charities declined to be reviewed and were replaced by the charities we had on reserve. We were pleased that such a high proportion of our selected charities were willing to work with us. This may be due to our growing reputation, our assurance that charities can veto the publication of our reviews, and our ability to influence increasingly large amounts of money in donations to recommended organizations. We might also have chosen charities that were more likely to work with us. For example, this year we evaluated fewer large charities that conduct general animal protection work. Instead, we focused on smaller charities that work in particularly promising areas of animal protection. Our reviews of charities that work in one promising area are likely to be more positive than our reviews of charities that work in many different areas, other things being equal, and charities may be more inclined to agree to the publication of more positive reviews. Moreover, smaller charities likely have more to gain from the exposure our review can provide.

After conducting background research and phone interviews with each charity, we selected 12 of them to proceed to the comprehensive review process. We did not write exploratory reviews for charities we would be evaluating comprehensively. We decided not to update our reviews of two of the three charities we were considering reevaluating, because our recent conversations with them did not significantly alter our understanding of their work.

We wrote a total of nine exploratory reviews and sent them to the corresponding charities for corrections and approval. Ultimately, eight charities allowed us to publish versions of our reviews. We were pleased that so many groups agreed to publication this year. In 2015, we drafted 16 exploratory reviews[1] and were able to publish just six of them.

The factors mentioned above as possible influences on charities’ willingness to work with us may have had a similar positive effect on the proportion of charities that agreed to publication. Additionally, this year was the first time we conducted phone calls with all charities we reviewed. The calls might have helped charities feel more invested in the review process and more satisfied with the results, or they might simply have provided additional information that led to more accurate reviews.

Comprehensive Evaluations

We comprehensively evaluated 12 charities in 2016. Of those, seven were our 2015 Top or Standout Charities due for reevaluation and one was a group for which we had written an exploratory review last year.

After conducting phone calls with the leaders of each charity, we drafted our comprehensive reviews and call summaries. This year, a board committee read an early draft of our reviews and provided feedback, both on our general evaluation process and on specific reviews. They also provided some initial thoughts about which charities we should recommend. We found it helpful to receive feedback from people who were not directly involved in researching and writing the reviews. Next year, we intend to schedule more time to implement changes based on the feedback from the board committee.

After editing our comprehensive reviews and making our recommendation decisions (described below), we sent the reviews to the corresponding charities for approval, along with our conversation summaries and other supporting documents we hoped to publish. This year, all but one group agreed to the publication of their comprehensive reviews.

Recommendations

Our research team and Executive Director held two meetings to select our 2016 Top and Standout Charities. In the first meeting, each of us shared a list, prepared individually, of our suggestions for Top Charities, Standout Charities, charities not to recommend, and borderline cases. Everyone agreed about some charities, while opinions diverged about others. We will provide limited detail about our decision process here, since most of it involved the specific aspects of individual organizations that we describe in our reviews. After extensive discussion, we agreed about the status of eight charities. We continued to discuss the remaining four charities over the next several days, and then we held a second meeting to finalize our decisions.

Our Top Charities excel on our criteria and they have clear plans for effectively using additional funding. They have strong organizational structures and they’ve demonstrated an interest in and capacity for evaluating their own programs. In order to provide a clear and memorable set of recommendations, we limit our number of Top Charities. If many groups perform well on all our criteria, we select only the best for our top recommendations.

Our Standout Charities are those we didn’t select for a top recommendation but nonetheless want to call to the attention of our donors for their potentially high impact. Some performed well on all our criteria, but not quite as well as our Top Charities. Others excel on only some of our criteria. For instance, they might work on very effective programs but have trouble absorbing new funding. We hope that offering a broad array of Standout Charities will be helpful to donors whose perspectives differ slightly from our own or who are searching for an effective charity that works in a particular area. Additionally, donors who plan to make unusually large donations may find that some of our Standout Charities can use their funding more effectively than they could use many small donations. We encourage anyone who donates to our Standout Charities because of our reviews to let us know so that we can track our impact.

For more information about the charities we recommend, see this blog post.

Important Considerations

A number of important questions came up in our discussions about which charities to recommend. We discussed the relative strengths and weaknesses of the charities we evaluated, as well as Animal Charity Evaluators’ own impact and our role in the animal protection movement.

Some of the questions we discussed are the same ones we grapple with every year. For example:

- Is there an ideal number of Top and Standout Charities that we should aim to recommend such that we can provide donors with options but not overwhelm them?

- How much money do we expect to mobilize to our Top Charities next year, and how does that affect the number of Top Charities we should select?

- How should we account for charities’ impact when it is difficult to measure in terms of lives spared or years of suffering averted?

- How should we account for the potential long-term impact of organizations? In particular, how should we think about the cost-effectiveness of a charity that works in an area where we would expect progress to be slow, such as cultured meat development or advancing the interests of animals on a country’s political agenda?

Other questions cropped up for us this year as a result of recent changes in the animal advocacy field. For example:

- The Open Philanthropy Project (OPP) has recently been making large grants to animal charities. How should those grants affect our recommendations?[2]

- What are the consequences of choosing not to recommend a previously recommended charity?

- How important is having a long and successful organizational track record relative to our other criteria?

These questions do not have straightforward answers, and we continually work to improve our understanding of them. For more information about our thinking, please continue to check our blog for posts about our updated recommendations, aspects of our evaluation process that surprised us this year, our method for assessing the impact of charities’ social media presence, and a chart comparing our Top and Standout Charities.

[1] Prior to 2016, we referred to exploratory reviews as “shallow reviews.”

[2] We spent a particularly long time discussing how the Open Philanthropy Project’s grants should affect our recommendations. Important subquestions include:

- To what degree should the OPP’s grants be taken as indicators of charity effectiveness?

- Should we focus more on areas where the OPP has not yet made as many grants, such as veg outreach?

- If the OPP believes their grant fills a charity’s room for more funding, but the charity makes a case that they could use substantially more funding, what is the actual room for more funding?

- Would choosing not to recommend a charity because they received a large grant from the OPP disincentivize similar grants to animal charities in the future?
